1. Bubble Sort

Bubble sort repeatedly compares adjacent elements and swaps them if they are out of order, pass after pass. In the i-th pass of bubble sort (ascending order), the last (i-1) elements are already sorted, and the i-th largest element is placed at index (N-i), counting from 0, i.e. the i-th position from the end.

Algorithm:

Optimization of Algorithm: Track whether any swap happened during a pass (the inner loop). If a pass completes without a single swap, the array is already fully sorted and the algorithm can stop. This reduces the number of passes when the array becomes sorted before all passes have run, and it also detects an already sorted input array in the first pass.
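The pass structure and the early-exit check described above can be sketched as follows. This is a minimal illustration, not the article's original listing; the function name `bubble_sort` and the returned pass count are assumptions made for the example.

```python
def bubble_sort(arr):
    """Sort arr in ascending order in place; return the number of passes made."""
    n = len(arr)
    passes = 0
    for i in range(n - 1):
        swapped = False
        passes += 1
        # After i completed passes, the last i elements are in their final place,
        # so the inner loop only scans the unsorted prefix.
        for j in range(n - 1 - i):
            if arr[j] > arr[j + 1]:
                arr[j], arr[j + 1] = arr[j + 1], arr[j]
                swapped = True
        # No swap in a full pass means the array is sorted: stop early.
        if not swapped:
            break
    return passes


data = [5, 1, 4, 2, 8]
bubble_sort(data)  # data is now [1, 2, 4, 5, 8]
```

On an already sorted list the function stops after a single pass, which is where the O(N) best case comes from.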

Time Complexity:

- Best Case: Sorted array as input, using the optimized approach. [ O(N) ]. O(1) swaps (none for a sorted array).

- Worst Case: Reverse-sorted array, or very few elements in their proper place. [ O(N^2) ]. O(N^2) swaps.

- Average Case: [ O(N^2) ]. O(N^2) swaps.

Space Complexity: Only a temporary variable is used for swapping [ auxiliary, O(1) ]. Hence it is an in-place sort.

Advantage:

- It is the simplest sorting approach.

- Best case complexity is O(N) [for the optimized approach] when the array is already sorted.

- Using the optimized approach, it can detect an already sorted array in the first pass, i.e. in O(N) time.

- Stable sort: does not change the relative order of elements with equal keys.

- In-Place sort.

Disadvantage:

- Bubble sort is comparatively slow: its average and worst cases are both O(N^2).

2. Selection Sort

Selection sort selects the i-th smallest element and places it at the i-th position. The algorithm divides the array into two parts: a sorted (left) subarray and an unsorted (right) subarray. In each step (ascending order) it selects the smallest element of the unsorted subarray and swaps it into the first position of that subarray, then repeats with the next smallest element.

Algorithm:
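A minimal sketch of the procedure described above, assuming a Python list as the array. The function name `selection_sort` and the returned swap count are illustrative choices, not part of the original article.

```python
def selection_sort(arr):
    """Sort arr in ascending order in place; return the number of swaps made."""
    n = len(arr)
    swaps = 0
    for i in range(n - 1):
        # arr[:i] is the sorted part; find the smallest element of arr[i:].
        min_idx = i
        for j in range(i + 1, n):
            if arr[j] < arr[min_idx]:
                min_idx = j
        # Move it to the front of the unsorted part (at most one swap per step).
        if min_idx != i:
            arr[i], arr[min_idx] = arr[min_idx], arr[i]
            swaps += 1
    return swaps


data = [64, 25, 12, 22, 11]
selection_sort(data)  # data is now [11, 12, 22, 25, 64]
```

The inner loop always scans the whole unsorted part, so the comparison count does not depend on the input order, while the swap count never exceeds N-1.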

Time Complexity:

- Best Case: [ O(N^2) ]. Also O(N) swaps.

- Worst Case: [ O(N^2) ]; the inner loop makes the same number of comparisons for every input, including a reverse-sorted array. Also O(N) swaps.

- Average Case: [ O(N^2) ]. Also O(N) swaps.

Space Complexity: [ auxiliary, O(1) ]. In-Place sort.

Advantage:

- It can also be used on list structures that make add and remove efficient, such as a linked list. Just remove the smallest element of the unsorted part and append it at the end of the sorted part.

- The number of comparisons is the same for every input, so the running time is predictable (there is no O(N) best case, even for an already sorted array).

- Few swaps: O(N) swaps in all cases.

- In-Place sort.

Disadvantage:

- Time complexity is O(N^2) in all cases; there is no faster best case scenario.

3. Insertion Sort

Insertion sort is a simple comparison-based sorting algorithm. It inserts every array element into its proper position. In the i-th iteration, the previous (i-1) elements (i.e. subarray Arr[1:(i-1)]) are already sorted, and the i-th element (Arr[i]) is inserted into its proper place in that sorted subarray.

Find more details in this GFG Link.


Algorithm:
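A minimal sketch of the insertion step described above, assuming a Python list (0-based, unlike the 1-based Arr notation in the text). The function name `insertion_sort` is an illustrative choice.

```python
def insertion_sort(arr):
    """Sort arr in ascending order in place and return it."""
    for i in range(1, len(arr)):
        key = arr[i]
        j = i - 1
        # Shift larger elements of the sorted prefix arr[:i] one place right.
        # The strict comparison keeps equal keys in order, so the sort is stable.
        while j >= 0 and arr[j] > key:
            arr[j + 1] = arr[j]
            j -= 1
        # Drop the saved element into the gap that opened up.
        arr[j + 1] = key
    return arr


insertion_sort([12, 11, 13, 5, 6])  # returns [5, 6, 11, 12, 13]
```

On a sorted input the inner `while` loop never runs, which gives the O(N) best case.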

Time Complexity:

- Best Case: Sorted array as input, [ O(N) ]. And O(1) swaps.

- Worst Case: Reverse-sorted array, where the inner loop makes the maximum number of comparisons, [ O(N^2) ]. And O(N^2) swaps.

- Average Case: [ O(N^2) ]. And O(N^2) swaps.

Space Complexity: [ auxiliary, O(1) ]. In-Place sort.

Advantage:

- It is easy to implement.

- Best case complexity is O(N) when the array is already sorted.

- It typically performs fewer writes than bubble sort, because elements are shifted into place instead of being swapped pairwise.

- For small values of N, insertion sort performs efficiently, as do other quadratic sorting algorithms.

- Stable sort.

- Adaptive: total number of steps is reduced for partially sorted array.

- In-Place sort.

Disadvantage:

- It is inefficient for larger values of N, so it is generally used only when N is small.

Time and Space Complexity:

| Sorting Algorithm | Time Complexity (Best Case) | Time Complexity (Average Case) | Time Complexity (Worst Case) | Space Complexity (Worst Case) |
|---|---|---|---|---|
| Bubble Sort | O(N) | O(N^2) | O(N^2) | O(1) |
| Selection Sort | O(N^2) | O(N^2) | O(N^2) | O(1) |
| Insertion Sort | O(N) | O(N^2) | O(N^2) | O(1) |


It is written on Wikipedia that "... selection sort almost always outperforms bubble sort and gnome sort." Can anybody please explain to me why selection sort is considered faster than bubble sort even though both of them have:

- Worst case time complexity: $\mathcal O(n^2)$

- Number of comparisons: $\mathcal O(n^2)$

- Best case time complexity:

  - Bubble sort: $\mathcal O(n)$

  - Selection sort: $\mathcal O(n^2)$

- Average case time complexity:

  - Bubble sort: $\mathcal O(n^2)$

  - Selection sort: $\mathcal O(n^2)$

RYO

3 Answers

All the complexities you listed are correct; however, they are given in Big O notation, which omits constant factors and lower-order terms.

To answer your question we need to focus on a detailed analysis of those two algorithms. This analysis can be done by hand, or found in many books. I'll use results from Knuth's Art of Computer Programming.

Average number of comparisons:

- Bubble sort: $\frac{1}{2}(N^2 - N\ln N - (\gamma + \ln 2 - 1)N) + \mathcal O(\sqrt N)$

- Insertion sort: $\frac{1}{4}(N^2 - N) + N - H_N$

- Selection sort: $(N+1)H_N - 2N$

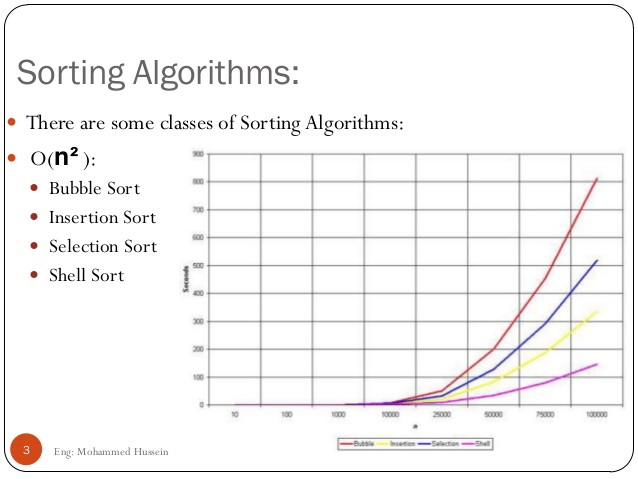

Now, if you plot those functions you get something like this:

As you can see, bubble sort is much worse as the number of elements increases, even though both sorting methods have the same asymptotic complexity.

This analysis is based on the assumption that the input is random - which might not be true all the time. However, before we start sorting we can randomly permute the input sequence (using any method) to obtain the average case.

I omitted time complexity analysis because it depends on implementation, but similar methods can be used.

Bartosz Przybylski

The asymptotic cost, or $\mathcal O$-notation, describes the limiting behaviour of a function as its argument tends to infinity, i.e. its growth rate.

The function itself, e.g. the number of comparisons and/or swaps, may be different for two algorithms with the same asymptotic cost, provided they grow with the same rate.

More specifically, Bubble sort requires, on average, $n/4$ swaps per entry (each entry is moved element-wise from its initial position to its final position, and each swap involves two entries), while Selection sort requires only $1$ (once the minimum/maximum has been found, it is swapped once to the end of the array).


In terms of the number of comparisons, Bubble sort requires $k\times n$ comparisons, where $k$ is the maximum distance between an entry's initial position and its final position, which is usually larger than $n/2$ for uniformly distributed initial values. Selection sort, however, always requires $n\times(n-1)/2$ comparisons.

In summary, the asymptotic limit gives you a good feel for how the costs of an algorithm grow with respect to the input size, but says nothing about the relative performance of different algorithms within the same set.

Pedro
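The difference in swap counts discussed in the answers can be checked empirically. The sketch below is an illustration added here, not part of any answer: the function names, the input size of 200, and the random seed are all assumptions, and the bubble sort variant used is the one with the early-exit check.

```python
import random


def bubble_sort_counts(values):
    """Return (sorted list, comparisons, swaps) for early-exit bubble sort."""
    arr = list(values)
    comparisons = swaps = 0
    n = len(arr)
    for i in range(n - 1):
        swapped = False
        for j in range(n - 1 - i):
            comparisons += 1
            if arr[j] > arr[j + 1]:
                arr[j], arr[j + 1] = arr[j + 1], arr[j]
                swaps += 1
                swapped = True
        if not swapped:
            break
    return arr, comparisons, swaps


def selection_sort_counts(values):
    """Return (sorted list, comparisons, swaps) for selection sort."""
    arr = list(values)
    comparisons = swaps = 0
    n = len(arr)
    for i in range(n - 1):
        min_idx = i
        for j in range(i + 1, n):
            comparisons += 1
            if arr[j] < arr[min_idx]:
                min_idx = j
        if min_idx != i:
            arr[i], arr[min_idx] = arr[min_idx], arr[i]
            swaps += 1
    return arr, comparisons, swaps


if __name__ == "__main__":
    random.seed(0)  # arbitrary seed, only to make runs repeatable
    data = random.sample(range(1000), 200)
    for name, sort_fn in [("bubble", bubble_sort_counts),
                          ("selection", selection_sort_counts)]:
        _, comparisons, swaps = sort_fn(data)
        print(f"{name:>9}: {comparisons} comparisons, {swaps} swaps")
```

For any input of length n, selection sort reports exactly n(n-1)/2 comparisons and at most n-1 swaps, while the bubble sort swap count equals the number of inversions in the input.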


Bubble sort performs many more swaps, which selection sort avoids.

Selection sort swaps at most n times, whereas bubble sort can swap up to n*(n-1)/2 times. Reading is cheaper than writing, even in memory, so if the cost of comparisons and other overhead is ignored, the number of swaps becomes the critical bottleneck.

simonmysun


Insertion sort

Insertion sort is a simple sorting algorithm with quadratic worst-case time complexity, but in some cases it’s still the algorithm of choice.

- It’s efficient for small data sets. It typically outperforms other simple quadratic algorithms, such as selection sort or bubble sort.

- It’s adaptive: it efficiently sorts data sets that are already substantially sorted. The time complexity is O(nk) when each element is at most k places away from its sorted position.

- It’s stable: it doesn’t change the order of elements with equal keys.

- It’s in-place: it only requires a constant amount of additional memory.

- It has good branch prediction characteristics, typically limited to a single misprediction per key.
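The adaptive behaviour in the list above can be illustrated by counting element shifts. This is a small sketch under stated assumptions: the function name `insertion_sort_shifts` and both sample inputs are made up for the example.

```python
def insertion_sort_shifts(values):
    """Return (sorted list, number of element shifts) for insertion sort."""
    arr = list(values)
    shifts = 0
    for i in range(1, len(arr)):
        key = arr[i]
        j = i - 1
        while j >= 0 and arr[j] > key:
            arr[j + 1] = arr[j]  # move one larger element a place to the right
            shifts += 1
            j -= 1
        arr[j + 1] = key
    return arr, shifts


# Adjacent pairs swapped: every element is at most k = 1 place from its
# sorted position, so the work stays linear in the input size.
nearly_sorted = [1, 0, 3, 2, 5, 4, 7, 6]
_, few = insertion_sort_shifts(nearly_sorted)

# Reverse order is the worst case: every pair of elements is inverted.
_, many = insertion_sort_shifts(list(range(7, -1, -1)))

print(few, many)  # prints: 4 28
```

The shift count equals the number of inversions in the input, which is why nearly sorted data is handled so much faster than reversed data of the same length.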

Selection sort

In practice, selection sort generally performs worse than insertion sort.

- It doesn’t adapt to data and always performs a quadratic number of comparisons.

- However, it moves each element at most once.

Further reading

See Optimized quicksort algorithm explained for a fast Quicksort implementation that uses Insertion sort to improve performance.
